Over the last decade, the human gut microbiome has been linked to a plurality of health and disease conditions including mental health, cancer, and obesity to name a few1,2,3. Despite extensive research utilizing metagenomics for microbiome characterization, there are currently no regulatory-approved clinical microbiome diagnostic tests in the US, and only one sequencing based test with the CE-IVD designation in Europe. In fact, the French Society of Microbiology, through a publication in Le Monde, has advised against microbiome testing, primarily due to insufficient knowledge and the personalized nature of defining a “healthy” microbiome4.

Concurrently, direct-to-consumer (DTC) personal wellness services are becoming increasingly accessible despite challenges regarding their technical and clinical validity5,6,7. As our understanding of the microbiome structure-function relationship is still limited, our ability to make confident diagnostic or prognostic predictions based on an individual’s microbiome is limited.

Despite these challenges, associations between the gut microbiome and human health are often prominently featured by media outlets, leading many consumers to seek a better understanding of their own gut health. While citizen science projects like The Microsetta Initiative have offered consumers basic information about their gut microbiomes, there remains a demand for more actionable information about the status and implications of personal gut health8,9. In response, numerous commercial DTC microbiome testing services have emerged, providing consumers with profiles of their gut microbiome. Many of these companies extend their services beyond microbiome profiling by classifying microbes as pathogens or beneficial, comparing the profile of a consumer’s microbiome against a comparative population, providing an index of gut microbiome health, or offering recommendations for lifestyle changes, dietary changes and/or dietary supplements.

While these recommendations are often limited to dietary interventions, regulatory concerns regarding consumer protection have been raised10. Notably, there has been a technological shift from end-to-end diagnostic testing, traditionally confined to clinical settings and conducted by trained medical professionals, to DTC testing services like 23andMe. These services have introduced home testing kits that allow consumers (patients) to collect their own samples, ship them to a laboratory, and access the results without consulting a clinician. A primary concern is that these at-home tests do not undergo the same rigorous oversight in validating analytical performance as traditional medical diagnostic tests. Such validation is crucial to assure clinicians, patients, and regulators of the reliability and actionability of test results. This lack of validation and the implications may not be apparent to the consumer.

In the age of digital publishing, numerous citizen scientists, reporters, and microbiome researchers have submitted samples to different companies and published on their results11,12,13. While these case studies are often intriguing and provide some anecdotal insight, to our knowledge, a rigorous evaluation of the analytical performance has not yet been conducted. The National Institute of Standards and Technology (NIST) has developed a suite of candidate human fecal standards, confirmed to be homogeneous and stable via rigorous multi’omic analyses14,15. These materials have been designed to enable stakeholders to evaluate the impact of methodological variability on their microbiome measurements, both qualitatively and quantitatively. The fecal standards, being homogenous and stable, are ideally suited to assess the precision (reproducibility) of measurement workflows within and across laboratories.

In this study, we describe our approach to evaluating the performance of seven DTC microbiome testing services using a NIST-developed human fecal standard. Each service employed an NGS-based analysis workflow (16S rRNA gene amplicon sequencing or whole metagenome shotgun sequencing (WMS)) and provided a detailed report of analysis. We assessed the reproducibility of the results, both within and across these testing services.

As part of its mission to promote U.S. innovation and industrial competitiveness, NIST has been working with stakeholders in industry, academia, and other government agencies to develop standards to support the advancement and commercial translation of microbiome science. To this end, we wished to assess the current state-of-the-art with respect to DTC gut microbiome testing services. To achieve this, we deployed a NIST-developed human gut microbiome (human fecal) standard to assess the precision/reproducibility of seven DTC gut microbiome testing companies. For each of the seven companies, three tests/kits were ordered via the company’s website. Upon receipt of the (3 × 7 = 21) sample collection kits, we used a homogenized pool of human fecal material to serve as the test sample material for each collection kit. Collection procedures were followed according to instructions provided by each company (Table 1). Samples were placed into the company-provided shipping container and returned to the company for analysis. Results, in the form of company-specific reports, were received anywhere from 2 to 8 weeks following shipment of the sample. Taxonomic profiles were manually extracted from these reports and used for all subsequent analyses. It is also noteworthy that the companies were not informed of the ongoing assessment until after all the samples had been processed and final reports were received by NIST. Full details on materials and methods can be found in the Methods section.

The process of translating the composition of stool sample into a microbiome report is multifaceted. As there are currently no universally accepted best practices for these methods, each provider likely employs a unique and often proprietary workflow. Microbiome measurements are impacted by a vast number of methodological variables including (1) sample collection, storage, and shipping methods, (2) nucleic acid extraction techniques, (3) NGS-library preparation, (4) sequencing technology, and (5) bioinformatic analyses. Bias can be introduced at every step, and even minor changes in methodology can lead to significant differences in results. In this study we had limited knowledge of the workflows that were employed by each company; however, we were able to infer key differences in both sample collection and sequencing methodologies.

Table 1 shows a comparison between companies regarding some methodological variables likely to impact the result (Table 1). Notably, there was considerable variability in methods for sample collection, including both the sample type (whole bowel movement vs. used toilet paper) and the sampling implement. Furthermore, some companies instructed users to add the sampling implement to a buffer, or to resuspend the sample in a buffer and discard the implement, while others directed users to ship the implement back ‘neat’, enclosed in a secondary sterile container. None of the shipping packages included ice, so all samples were shipped under ambient conditions (the study was conducted in the spring). The exact transit times between shipping and receipt of samples were not reported by all companies. Typically, notification of sample receipt or processing was sent 5 days – 7 days after shipping.

Regarding sequencing methodologies, some companies used marker gene amplicon sequencing while others relied on WMS. Among those using amplicon sequencing, one utilized 16S rRNA gene amplicon sequencing of the V3-4 region and the other two used 16S rRNA gene amplicon sequencing of the V4 region; additionally, one company included sequencing of internal transcribed spacer (ITS) amplicon for fungal identification. The read length and depth of sequencing, which can impact the specificity and sensitivity of taxonomic identification, varied by company. Although a wide range of read depths were reported, the variability within a single company was generally narrower (Table 1). Read depth ranged from <2 × 104 to >1 × 107. Interestingly, higher read depths did not always correlate with a greater number of species identified. For example, the three replicates from company C each reported over 600 species with read depths between 2 × 104 and 3 × 104. In contrast, company D reported 95, 98, 99 species for their three replicates, each with read depths exceeding 1 × 107 reads. Although most details of read processing were not disclosed, we noted different cutoffs (ranging from 0.1% to 8 × 10−6 %) for reporting taxonomic assignment based on the taxonomic tables provided.

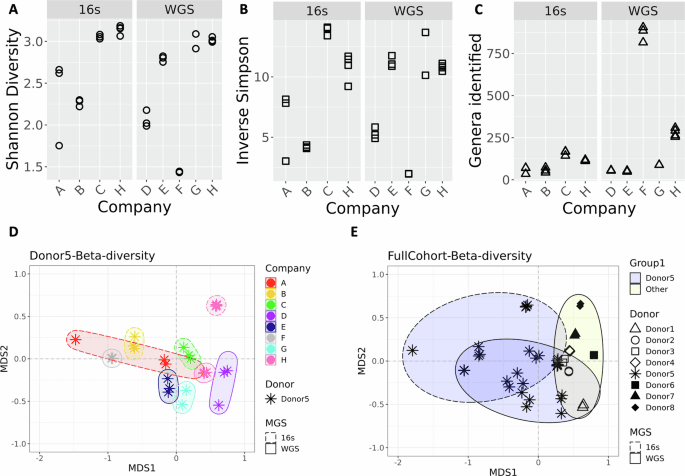

The process of generating the taxonomic tables from the data provided by each company required manual curation, as the reports came in varied formats (e.g., PDF, HTML). Additionally, a complete taxonomic profile was not part of Company A’s report but was obtained after sending a request to their help center. For comparison, NIST (referred to as company H) also performed metagenomic analyses on aliquots of the same material (donor 5) that was shared with the DTC companies. In addition, taxonomic data from 7 other unique donors (donors 1, 2, 3, 4, 6, 7, 8) were included in the NIST workflow to enable a comparison of the differences associated with biological variability (donor) vs. technical (methodological) variability. Although species-levels taxa were reported by each DTC company, all subsequent analyses at NIST were conducted using genus-level taxa assignments. A comparison of alpha diversity revealed a range of diversity values with no clear patterns based on read depth or sequencing method (Fig. 1A–C, respectively). Bray-Curtis dissimilarity was calculated to compare all replicates from each of the seven companies and NIST (company H) (Fig. 1D). Along with the alpha-diversity plots, the ordination plot can indicate inter-lab reproducibility. Tighter distribution of points in scatter plots (Fig. 1A–C) and on the ordination plot (Fig. 1D) suggest higher reproducibility for a company (e.g., Company F), while Company A showed poor reproducibility. Moreover, there was no distinctive clustering based on whether WMS or amplicon sequencing was used (WMS shown with solid lined, and 16S rRNA gene amplicon sequencing with dashed lined ellipses in Fig. 1D). One question raised was how the diversity observed for a single sample analyzed by multiple companies compared to biological diversity from different donors analyzed by a single workflow. A second Bray-Curtis dissimilarity index was calculated that included data from seven additional donors processed with the NIST workflow. Surprisingly, the diversity observed for samples from a single donor analyzed by different companies (asterisk in Fig. 1E, blue ellipses) appeared to be greater than or equal to that of biologically distinct samples (Fig. 1E, other shapes, yellow ellipse). This demonstrates that methodological variability can be greater than biological variability.

Plots show alpha-diversity calculated using A Shannon B Inverse Simpson indices and C for the total genera identified. Three replicates are plotted for companies A, B, C, D, E, and F; company G has 2 replicates and company H has 4. D Ordination plot showing beta-diversity based on genera for different companies processing samples from a single donor. Colors indicated different Companies; ellipses are drawn to further identify samples processed with the same Company. Ellipses with dashed lines indicates 16S amplicon sequencing was used; solid lines on the ellipses indicate WMS. E Ordination plot showing beta-diversity based on genera for a single donor processed by different companies (asterisk) and different donors processed by a single workflow (other symbols). The yellow ellipses indicate the cluster of other donors processed with a single workflow; blue ellipses indicate samples from a single donor. Similar to plot D, dashed lined ellipses denote 16S, solid denote WMS.

While Fig. 1E provides a visual representation of this variability, we also sought a more quantitative way to compare the methodological (between companies) and biological (between donors) variability. Figure 2, show a ratio of methodological to biological variance; a complete description of this analysis is available in the “Methods” section. Briefly, a Bayesian approach was used to determine the variation resulting from different methods being applied to the same samples (company analysis of Donor 5) and the variation resulting from a single method applied to multiple donors (NIST analysis of all 8 donors). This approach was applied to only the common taxa for this demonstration. The scatter cloud displayed in the figure represents 100 draws per sample. The sampling is reproducible (seed set), and we verified that subsampling does not materially alter the posterior summary statistics. Crucially, the full posterior summary (posterior mean and 95% credible intervals shown in red, blue, and black) is overlaid and remains the basis for inference. As shown in Fig. 2, only one genus (Haemophilus, shown in black) had a mean value and interval completely below 1, indicating there was less variation between methods than between donors for this genus. In contrast, 17 of the 18 taxa either had an interval completely above 1 (methodological variability exceeds donor) or had an interval that crossed 1 (similar variability between method and donor). This figure further supports the conclusion that methodological variability can be of the same magnitude as biological variability, and for some genera, methodological variability exceeded that observed between donors. Additionally, this figure reiterates that bias is often taxa-specific, an observation that is masked when summary metrics such as β-diversity and ordination plots are used.

For the 18 genera that were identified in at least 1 replicate by each company, we fit a Bayesian hierarchical model. The ratio of the two variance components (colored, solid points) and the 95% credible interval surrounding (error bars) it are plotted. To visually represent posterior uncertainty without overwhelming the figure, gray points represent a randomly subsampled 100 draws per taxon, displayed in the scatter-cloud. This ensures a representative spread of the posterior while preserving figure clarity. The red, dashed line designates 1 on the graph. An interval fully above 1 (red) indicates that the methodological variability is greater than biological variability with 95% confidence; an interval that straddles 1 (blue) indicates that there is no significant different between the two variance components with 95% confidence; and an interval that is fully below 1 (black) indicates that the methodological variability is less than the biological variability with 95% confidence.

In addition to comparing diversity metrics, the taxonomic composition was compared on a genus-by-genus basis to identify the genera common to all analyses. For this analysis, any taxa that was not given a specific genus designation was renamed unspecified, and these assignments were then grouped with any unidentified taxa that were noted in the report. While some companies provided information that allowed us to determine the percentage of unidentified reads, this was not true across all companies. In fact, the NIST analysis, based on Bracken, removed unidentified reads prior to calculating relative abundance. Therefore, while we were able to show this data for some companies, absence of the unspecified category in Fig. 3 does not indicate 100% of the taxa were identified but could indicate that the unidentified reads were dropped earlier in the process, prior to calculating relative abundance values for known taxa. Despite vast differences in read depths, it was hypothesized that the most common genera would be present in all samples, and less abundant genera would only be seen in samples with higher coverage. The number of identified genera ranged from 34 to 906, with a relatively narrow range for each single company (Fig. 1C). From all 9 analyses (7 companies and NIST WMS and 16S rRNA gene amplicon sequencing), a total of 1208 unique taxa were identified. Only 3 genera were found in all samples when comparing all taxonomic profiles (Supplementary Fig. 1). A single replicate from Company A had a distinctive taxonomic profile (Fig. 3B, and observed in Fig. 1D as the single red asterisk on the left), which when removed from the analysis, increased the number of common genera to 17. This accounted for less than 2% of the total genera identified, or between 2% and 43% for any given sample. In most cases, these common genera constitute a majority of the genera (>50% of the abundance for companies A, C, D, G, and both NIST analyses), or the majority of genus-level specified reads (companies B and F). Additionally, the conservation of identified genera between replicates varied, from as low as 6% for Company A to as high as 98%. Excluding Company A, the genera conserved between replicates from the same company accounted for at least 95% of the identified sample composition. This indicates that most variability between replicates from a single company occurs among taxa present at low abundance and highlights the importance of empirically determined read cut-offs for taxa inclusion in a report.

A The relative abundance of genera that were identified in all but one test. Each company is indicated by a letter, with replicate samples indicated by the number after the dash. B Relative abundance data for the one test sample (A-3) that was excluded from the overall analysis. All genera that were identified are included in the plot, C Relative abundance of genera found in common across replicates run by company A. The gray portion in all bars represents taxa that were not given a Genus level identification; this includes both taxa designated at a higher taxonomic level or that were listed as unidentified.

Replicate 1 from Company A had a significantly different taxonomic profile compared to the two other replicates from Company A and those from other companies (Fig. 3B). This egregious anomaly prompted further investigation. Compared to replicates 1 and 2 from Company A, replicate 3 had approximately half the number of reads (186 k compared to just over 400 k). This might have contributed to its distinct profile, as only 6 genera were common between replicate 3 and replicates 1 and 2, corresponding to 10% of the total genera and abundance for these replicates. In contrast, replicates 1 and 2 shared 69 out of 70 and 71 genera, respectively. Notably, the other 28 genera in replicate 3 were not unique but were identified in at least one other replicate across all the companies. It is also worth noting that the percentage of unspecified reads in replicate 3 comprised over 50% of the sample, whereas the unspecified designation accounted for just over 20% in replicates 1 and 2. These discrepancies raise concerns not just because the profile was distinct from other replicates originating from the same sample, but also because it apparently passed all quality control checks from the company, resulting in a report being generated and sent back to the customer.

Figure 3 provides a qualitative comparison of the relative abundances for different common taxa reported by each company. To quantify the level of agreement between companies, we examined the results on a taxa-by-taxa basis using a Bayesian approach that’s common in interlaboratory studies. The same 18 genera from Fig. 2 were analyzed, and the consensus value (Fig. 4 and Supplementary Fig. 2, yellow horizontal line) and corresponding uncertainty (Fig. 4 and Supplementary Fig. 2, shaded yellow region) from all nine measurements (7 companies and 2 methods from NIST) were determined. Depending on the genera being evaluated a range of comparability was observed (Fig. 4, Supplementary Fig. 2). Of note, no method reported concordance with the consensus relative abundance range for all 18 taxa analyzed. Rather, for any given method, the number of taxa where the relative abundance overlapped with the consensus range ranged from 10 to 16 taxa. In general, companies observed a genera dependent difference for outliers, reporting higher relative abundances for some and lower for others. Company A and H were the exceptions. Two genera (Blautia and Roseburia) from Company A had values outside of the consensus range, and the relative abundance for both genera was lower than the consensus ranges. For Company H, regardless of sequencing method (16S or WMS), when relative abundance values were outside of the consensus range, they were higher than the consensus range. From a genera specific perspective, Streptococcus was the only genera where the relative abundances for all 9 methods overlapped with the consensus range. Roseburia had the least agreement with only 3 of the 9 methods reporting relative abundance values that overlapped the consensus range. Whether measurement variability was greater than or indeterminate from biological variability (Fig. 2) did not appear to be a predictor of agreement for a given genus. An average of 2.9 outliers were observed for genera where methodological variability was greater than biological (Supplementary Fig. 2), and an average of 3.1 outliers were observed for genera where biological and methodological variability were indistinguishable (Fig. 4). It’s important to note, that these results are not indicative of accuracy since we do not know the actual composition of these samples. Instead, this analysis shows the agreement (or lack thereof) between companies (methods) for a given taxa and provides a means to quantify the level of that agreement.

Hierarchical Bayesian random effects model with a dark uncertainty was determined to compare individual company findings (A-G) and NIST findings (H1-WGS, H2-16S) to a consensus value (shown by yellow line), range (shown by yellow shaded region) for the 10 genera in Fig. 2 with variance ratios that straddle 1. The O’s indicate the average value for each company using 16S analysis and the X’s indicate the average value for companies using WMS. Thick gray lines represent the variability for each company, and the thin gray extensions capture additional variability attributed as dark uncertainty (further explained in the “Method” section).

Relative abundance for select genera A Bacteroides B Bifidobacterium C Clostridium (to include Clostridioides) D Faecalibacterium and E Roseburia were plotted compared to reported comparators from company G as a gray dashed line and from company E as a shaded yellow region. The gray dashed line represents the average from the American Gut Project reported by company G as a comparator. The yellow shaded region indicates the IQR used to define the healthy population as a comparator used by company E. The symbols indicated metagenomic analysis: X’s designate companies using 16S amplicon sequencing and O’s designate WMS analysis. Three replicates are shown for each Company except for Company G which only had 2 successful samples and company H for which 4 samples were analyzed by both 16S and WMS resulting in 8 data points.

Our rigorous assessment of seven microbiome testing companies has spotlighted the systemic issue of poor comparability that plagues the industry. The primary contributor to this outcome is methodological variability. The use of a homogenous stool reference material enabled a systematic evaluation without the confounding factors of biological or composition heterogeneity that may be found in a traditional stool sample. The significant impact of methodological variables on the results of microbiome metagenomic measurements has been demonstrated by us and others, and DTC microbiome testing companies are not immune to these sources of bias14,17,18,19,20. Consistent with reported findings, reproducibility within a locked down workflow (as demonstrated by within company comparisons) tend to be very good. It is important to emphasize that reproducibility is one of many factors that should be considered when evaluating microbiome analyses. While company F appears to have exceptional reproducibility (Fig. 1) it also has a very high number of unspecified reads reported as part of the workflow (Fig. 3). None of the features evaluated should be viewed in isolation, and transparency of these features will be important for consumers to make informed decisions regarding these tests.

Another important factor to emphasize with this study, is that the stool reference material does not represent an absolute “ground truth”. Consequently, this study cannot definitively determine which results are closest to the actual microbiome composition, nor was that the goal of this study. Nonetheless, access to a homogenous and standardized material, comparable to the complexity to a real-world sample, is sufficient for evaluating the precision of the sample processing workflows. Overall, this study revealed that the impact of methodological variability (between company comparisons) was on the same order of magnitude as biological variability (samples taken from 8 distinct donors). These findings have major implications for the comparability of tests on a single individual from two different companies and highlights the need for caution in interpreting and acting on these test results, especially in the absence of validated diagnostic evidence. Of note, despite the promising potential of the gut microbiome in human health, as evidenced by recent drug approvals21,22,23, the diagnostic capabilities of these tests remain largely underdeveloped, partly due to the challenge demonstrated in this study.

Another crucial aspect we considered was the validity of the diagnostic and prognostic implications derived from these tests. Questions arise as to how a testing service determines whether a patient’s microbiome is healthy or unhealthy, what the relevant diagnostic and prognostic biomarkers are, and how these biomarkers were identified and validated. These questions are significant, as the test results may lead consumers to make potentially unwarranted or unsafe lifestyle changes. Many of the results are reported with respect to an average or “healthy” microbiome; however, defining a healthy microbiome remains a challenge due to the heterogeneity of the human population, confounders, and the possibility of multiple healthy microbiome definitions. Even among the DTC companies evaluated, there was no standard practice for selecting a comparative “healthy” population. In some cases, companies used data generated in-house, either by measuring a cohort specifically recruited to serve as the comparative population or by aggregating the data from prior customer’s test samples. Alternatively, some companies look to previously constructed datasets such as that from American Gut or the Human Microbiome Project (HMP); both methods raise concerns. A benefit of using data generated in-house is that it was likely collected following the same workflow being used for the test samples, which should reduce the impact of methodological variability as reproducibility within a workflow is generally very good. However, little information was given on the demographics of these comparative populations, raising questions about how representative they are. In contrast, comparisons using data from previous efforts such as the American Gut, may provide more demographic information and/or a larger, more representative dataset than possible for an individual company. However, this comparison is fraught. As detailed above methodological variability is a major hurdle for data comparability. Unless the DTC company is following the same protocol used to generate the comparative population data, the observed differences are unlikely to be due entirely to biology, but rather a combination of biology and methodology, or perhaps methodology alone. This point is illustrated in Fig. 2 where the variance related to methodology is only smaller than biology for 1 out of 18 genera analyzed. It is also worth noting, when we compare the consensus values from this study to the healthy volunteer data, some of the ranges/averages overlap but others do not (Supplementary Table 1). Together these figures emphasize the lack of reproducibility between methods thus preventing comparability between studies

In general, the health recommendations of many DTC microbiome testing companies focus on healthier eating habits, which may seem innocuous and unlikely to cause direct harm to the consumers. However, it is crucial to recognize that a significant portion of the individuals who seek these tests are often those with chronic gut conditions, who may have struggled to find effective treatments. For these individuals, the variability in test results or the lack of expected outcomes from the recommendations provided, could not only lead to a loss of consumer faith in the science but also result in the delay of appropriate medical care. Additionally, some companies recommended that customers start taking costly supplements (e.g., probiotics) that are sold by the same company and for which there is very little clinical evidence for efficacy.

Given that adopting a single methodological workflow across the entire microbiome industry is unrealistic, there are several steps that could be taken to improve result transparency and interpretation. There are two primary areas to address with respect to DTC testing (1) clinical validity and (2) analytical performance. With respect to the first, the rapidly expanding field of microbiome research is constantly improving our understanding of the associations between health and the microbiome, but much of the data is likely correlative rather than causative. Without substantial causative evidence, the clinical validity of these tests will remain a challenge. While not a solution, inclusion of peer reviewed literature citations to support any claims to health status, or designation of a microbe as beneficial or harmful, would provide consumers (and clinicians) with a means of understanding the legitimacy of their results.

There are many readily available resources for addressing analytical performance. An ideal standard that enables both accurate and precise testing does not yet exist; however, as demonstrated by this study, inclusion of standard fecal material could be used to determine the minimum number of reads required for reproducible results and other minimum standards to ensure result validity. Additionally, while on a smaller scale than the hundreds of organisms present in a stool sample, mock communities of 10-20 common gut microbes are available from several commercial outlets that enable end-users to assess the accuracy and precision of their microbiome measurements. Although beyond the scope of this discussion, in addition to the reference materials mentioned here, there are numerous publications on validating analytical performance of a metagenomic workflow24,25,26. The adoption of reference materials and the reporting of the analytical performance gleaned from these materials, would help customers and other stakeholders (including regulatory bodies) understand and characterize bias in a given workflow. The industry should consider developing guidelines, such as a consensus document on minimum requirements, with input from testing companies and other stakeholders. Similar documents, such as MIQE, EMMI, AsqMI, and STORMS have been developed for other high complexity molecular diagnostic assays27,28,29,30. Such a document would support test validity and bolster consumer confidence for DTC gut microbiome testing, and indeed there is support for this approach with a recent publication co-authored by an international expert panel of physicians and researchers31

As part of the Microbiome Program, researchers at NIST have undertaken the challenge of producing a whole stool gut microbiome reference material. While the final reference material will be comprised of pooled donor material, as part of early investigations NIST obtained homogenized material from individual donors. Donor material was obtained from multiple volunteer donors by The BioCollective (Denver, CO, USA). All whole stool samples were collected after informed consent under approved IRB protocols at The BioCollective. All work conducted at NIST was reviewed and approved by the U.S. National Institute of Standards and Technology Research Protections Office. All ethical regulations relevant to human research participants were followed. Briefly, multiple stool samples from a single donor were combined, homogenized with molecular grade water to create a slurry with a final concentration of 100 mg/mL. This material was aliquoted, lyophilized, and stored at -80 ˚C until use. Having a relatively large quantity of homogenized stool gave NIST the confidence that any deviations seen in the results were due to bias introduced during the sample processing workflow and not biological differences between samples. A single aliquot of lyophilized stool was not sufficient as starting material; therefore, to further ensure a homogenous starting sample, multiple aliquots were pooled in a single 50 mL conical tube, combined with water to create a semi solid material consisting of 540 mg of stool for sampling. This pooled material was used to inoculate all tests within 1 h of preparation.

NIST analysis of the homogenized whole stool sample

In addition to the analyses provided by the service providers, the standardized fecal sample was also analyzed in the NIST lab using in-house DNA extraction, 16S rRNA and WMS workflows, to provide a better understanding of how reproducibility within a service provider compared to reproducibility within our own lab. Four replicate DNA extractions were carried out using the Zymo Research Quick-DNA Fecal/Soil microbe miniprep extraction kit (cat # D6010) per protocol with the vortex genie adapter for bead beating carried out for 45 mins. WMS and 16S rRNA sequencing library preps were carried out on the same DNA extract. 16S rRNA library prep was carried out as follows: 12 ng of genomic DNA was amplified in a 25 µL reaction comprised of 2x Kapa HiFi Master Mix (Roche cat # KK2601/2), 1 µL of 10 µmol/L V4 515 F/806 R primers with Illumina adapters (5′- TCGTCGGCAGCGTCAGATGTGTATAAGAGACAGGTGYCAGCMGCCGCGGTAA-3′, 5′- GTCTCGTGGGCTCGGAGATGTGTATAAGAGACAGGGACTACNVGGGTWTCTAAT-3′), under the following conditions: 95.0 °C (3 min), and 18 cycles of amplification 98.0 °C (30 s), 55 °C (15 s), 72 °C (20 s), and a final extension at 72 °C for 5 min. 16S amplification products were purified with SPRIselect beads (Beckman Coulter cat # B23317/B23318/B23319) in a 0.8:1 (bead to product) ratio. The cleaned 16S products were indexed using IDT® for Illumina® DNA/RNA UD Indexes with 8 amplification cycles, but the same thermocycling conditions used for the 16S amplification. The indexed 16S amplicons were purified with SPRIselect beads in a 1:1 (bead to product) ratio. Purified indexed amplicons were pooled in equal quantities and adjusted to a 4 nM pool for sequencing preparation following the MiSeq Systems Denature and Dilute DNA Libraries Guide, Protocol A (Document # 15039740 v10, February 2019).

WMS library prep was carried out using Covaris focused-ultrasonication for fragmentation followed by NEB for indexing. 10 µL of the DNA extract (~500–700 ng of DNA) was added to a 120 µL of TE in a microtube AFA Fiber Pre-slit Snap Cap 6 ✕ 16 mm tube (Covaris Cat#: 520045). Samples were fragmented using the following settings: Intensity: 10%; Duty cycle: 10%; Cycle/burst (CPB): 100; Time: 1 min. Fragmented DNA was analyzed using the Tapestation and average peak size was estimated to be 280 bp. Fragmented DNA was indexed using the New England Biolab (NEB) NEBNext Ultra II DNA Library Prep Kit for Illumina with NEBNext Multiplex oligos for Illumina (NEB cat # E7645S/L; E6440) following the instruction manual (V6.1_5/20) with 50 µL of fragmented DNA as input; this corresponded to 200–300 ng of DNA input. Purified indexed samples were pooled in equal quantities and adjusted to a 4 nmol/L pool for sequencing preparation following the MiSeq Systems Denature and Dilute DNA Libraries Guide, Protocol A (Document # 15039740 v10, February 2019). Both 16S and WMS sequencing libraries were run using a MiSeq Reagent Kit v3 600-cycle cartridge (MS-102-3003) with pair-end reads (raw reads are be available data.nist.gov32 and BioProject ID PRJNA1364055).

16S reads were analyzed in R (4.1.0) using CutAdapt33 for primer trimming and DADA2 (1.20.0)34 with the silva database35 (v138) for taxonomic classification. The complete R code can be found at data.nist.gov32 (16S_Analysis/Dada2-16sAnalysis2024).

WMS read quality was assessed with FastQC. Reads were trimmed using bbduk (bbmap 38.90) with the following parameters: t-30, qtrim = r1, trimq = 30, minlen = 100, maxns = 0. Trimmed reads were taxonomically profiled using Kraken236 followed by bracken at the genus level37 with default parameters against the prebuilt GTDB-tk database (r89)38,39

WMS was also conducted on the 7 additional donors of the candidate fecal reference material to serve as comparators for assessing the magnitude of methodological variability compared to biological (donor) variability. Two replicate DNA extractions from homogenized whole stool from all 8 unique donors (the donor used for all previous analyses plus 7 additional donors) was carried out using the Qiagen PowerSoil Pro Kit. WMS library preparation was done using Illumina NexteraXT followed by sequencing on the Illumina HiSeq X platform (raw reads are available at data.nist.gov32). WMS reads were profiled using the Kraken and bracken workflows as described above.

Selection and preparation of DTC gut microbiome tests

NIST conducted a web search for “at home direct to consumer microbiome testing kits” and selected 7 companies for inclusion in the study; 3 kits were ordered from each company to assess the intra-and inter-laboratory precision (reproducibility). A NIST employee created an account for each company using a personal email account so that the companies were blinded to the experiment at the time of testing.

Each test kit came with instructions, collection devices, sample containers, and pre-paid shippers. To the extent possible, the sampling instructions were followed per the manufacturers’ instructions. There were two notable deviations from the sampling instructions. First, some companies provided proprietary collection devices to support sampling directly from a bowel movement, while others instructed users to sample from used toilet paper; for this experiment all samples were taken directly from the 50 mL conical. Second, some companies provided instructions to sample multiple sections on the bowel movement, which was not possible with our sample type. As mentioned above, all kits were inoculated within 1 h of preparing the sample and then immediately packaged in the prepaid shippers and dropped off for shipping at the respective location (e.g., USPS, FedEx) as designated by the shipping label.

Six of the 7 service providers produced a report of analysis for each kit (n = 3) sent. One service provider produced 2 reports and reported a sample failure. An additional sample was sent, and this also failed their sample QC (the specific type of failure was not disclosed) and a third attempt was not made. Provider reports varied in content and format necessitating manual curation of the taxonomic data from each report to produce a consistent format. In addition to creating a consistent file and format, some datasets provided taxonomic resolution down to the species or even strain level whereas other taxa were identified at a higher order, for example only down to the family level. A genus level taxonomic classification was chosen to facilitate comparisons between datasets; therefore, the relative abundance of all species and strain level designations were summed to the genus level. The relative abundances for unclassified taxa and taxa where a specific genus designation was not given were summed and given an unspecified designation. Taxonomic tables generated from the NIST 16S and WMS analysis were put in the same format. All resulting tables were used as the input files for future analysis conducted in R. The genus table (tab 1, genera and relative abundances for all samples) and accompanying metadata (tab 2) are available as a Supplementary data file. All other intermediate tables and the complete code for analysis and figure generation can be found at data.nist.gov32. We are unable to share the actual reports in a blinded fashion so this version of the data will not be made publicly available.

Determination of variance ratios for common genera

A The information from the Companies can be considered in the framework of a typical one-way nested ANOVA with the equation:

where \({y}_{{ij}}\) represents observed relative abundances, \(\mu\) is the overall mean, \({\theta }_{i}\) denotes the effect of the \(i\)-th Company, and \({\epsilon }_{{ij}}\) accounts for residual variation among replicates nested within each Company. We can use the information from all Companies to find a reasonable estimate of methodological variability (\({\sigma }_{a}^{2}\)) and information from Company H to find a reasonable estimate of biological variability (\({\sigma }_{e}^{2}\)). In order to verify the visual of the MDS plot in Fig. 1 quantitatively, we can perform an inferential analysis on \({\sigma }_{a}^{2}/{\sigma }_{e}^{2}\) using a hierarchical Bayesian model with priors that are generally noncommittal, but stay within the known restrictions of the data40,41,42. The complete code to run this model in R is available at data.nist.gov32.