On Monday, April 6, 2026, the decentralized social network Bluesky experienced intermittent service disruptions, a routine problem for any tech platform. What set this outage apart was the immediate reaction from users, who blamed it on the platform’s developers allegedly relying on AI-assisted 'vibe coding', the practice of using AI tools to generate code with minimal human oversight. Within hours, Bluesky’s feeds were flooded with memes, criticism, and outright mockery targeting the development team, even though the company attributed the issue to an 'upstream service provider.' The episode underscores a growing divide in the tech community: while some developers embrace AI coding tools as a productivity booster, many end users and critics view them as a shortcut that invites sloppiness and unreliability. This tension reflects broader anxieties about AI’s role in software development, from security risks to fears of job displacement.

- Bluesky’s Monday outage was met with widespread accusations blaming 'vibe coding' despite no evidence linking the issue to AI tools.

- Bluesky developers have openly discussed using AI coding tools like Claude Code, sparking backlash from users who oppose AI integration.

- The debate highlights a divide between developers who see AI as a tool and skeptics who view it as a threat to software quality.

Why the Bluesky Outage Became a Lightning Rod for AI Skepticism

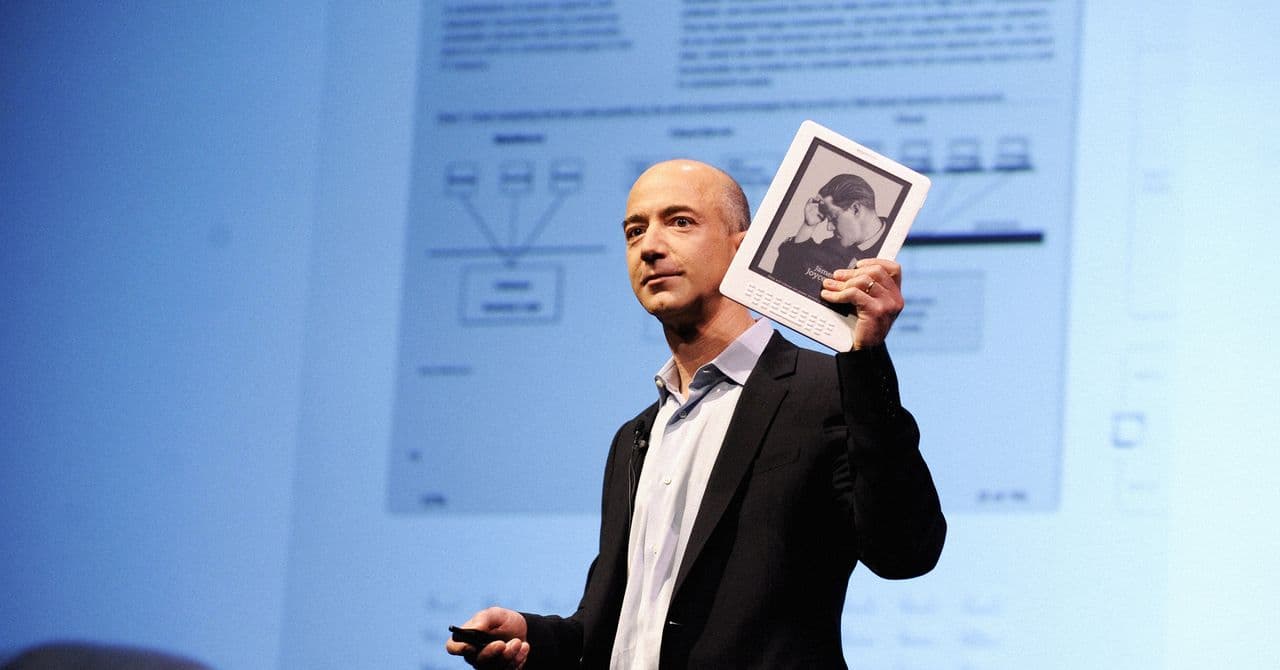

The Bluesky development team’s admission that it uses AI tools, including Anthropic’s Claude Code, set the stage for Monday’s backlash. Jay Graber, Bluesky’s founder and Chief Innovation Officer, confirmed in late March 2026 that "Bluesky is made with AI," with engineers and even non-engineers using Claude Code. This transparency, while intended to showcase innovation, instead fueled skepticism among users who associate AI-assisted coding with poor-quality outcomes. Jeromy Johnson, a Bluesky technical advisor known as "Why" on the platform, went further in February, stating that "in the past two months, Claude has written about 99% of my code." Such statements, while framed as a testament to AI’s capabilities, were interpreted by many as a reckless abandonment of traditional coding practices.

The Attie Project: AI Coding Tools Beyond the Core Platform

The controversy deepened on March 28, 2026, when Bluesky announced Attie, a side project that allows users to build custom feeds by chatting with a Claude Code-powered bot. While Attie was explicitly described as a tool to "help you create custom feeds without having to know how to code," critics saw it as a dangerous step toward normalizing AI-generated software. Bluesky’s response—that the goal was to "give people greater control"—failed to quell concerns, particularly among users who had migrated from Elon Musk’s X (formerly Twitter) in 2024 under a promise that Bluesky would not use posts to train AI models. The disconnect between these assurances and the company’s embrace of AI tools underscored a growing mistrust among a vocal segment of the user base.

We hear the concerns about AI. Our goal is to use this technology to give people greater control, not to generate content. Attie uses AI to help you create custom feeds without having to know how to code.

The Rise of 'Vibe Coding' and Its Controversial Reputation

The term "vibe coding" emerged in tech circles in early 2025 to describe a controversial practice: using AI tools to generate code with little to no understanding of how it works. Originally associated with amateurs and non-coders, the phrase has since been repurposed to criticize any use of AI in software development, regardless of the developer’s expertise. This broad-brush characterization ignores a key distinction: experienced developers often use AI as a sophisticated autocomplete tool, relying on their own knowledge to review, refine, and test the output. Paul Frazee, Bluesky’s CTO, emphasized this point in a March 2026 thread: "The Bluesky team maintains the same review, red-teaming, and QA processes that we always have. AI coding tools have been proving useful, but haven’t changed the fundamental practices of good engineering." Yet the stigma persists, fueled by high-profile incidents at AI companies, such as Anthropic’s March 2026 client source code leak, which some users erroneously blamed on 'vibe coding' even though the company attributed it to human error in deployment.

AI Coding Tools: Boon or Boogeyman?

Proponents of AI coding tools argue that they enhance productivity by handling repetitive tasks, generating boilerplate code, and even suggesting optimizations. Boris Cherny, an Anthropic engineer, defended his team’s reliance on Claude Code in a post-leak statement, noting that the tool generates "pretty much 100% of our code." Similarly, Bluesky’s Johnson described AI as a transformative force in his workflow, reducing the time spent on mundane coding tasks. However, critics point to real-world examples of AI-generated code gone wrong: a six-hour Amazon outage in early 2026 was blamed on AI-assisted debugging, and automated coding agents have been linked to irreversible file deletions. Security risks are another major concern. A 2025 report by Stanford University found that AI-generated code contains vulnerabilities 30% more often than human-written code, raising alarms about the potential for backdoors or exploits in widely deployed software.

The Human Factor: Why Software Glitches Persist No Matter the Tool

Despite the finger-pointing at AI, the truth is that software glitches and service outages predate AI coding tools by decades. The 2021 Facebook outage, which lasted nearly six hours and impacted 5% of global internet traffic, was caused by an errant configuration change—not AI. Similarly, the 2024 CrowdStrike update that crashed Windows systems worldwide was the result of a human error in testing, not automated code. Frazee, Bluesky’s CTO, echoed this sentiment in a March 2026 post: "On a personal level, I’ve been a software engineer since I was 12. I joke about the quality of my code, but the reality is that I take it incredibly seriously. The source of those jokes is humility to how difficult it is to write complex software and avoid bugs, or outages." This complexity underscores the folly of blaming every tech failure on AI tools alone. Even the most advanced AI systems are only as good as the humans who design, deploy, and monitor them.

The Cultural Divide: Developers vs. Users in the AI Debate

The Bluesky outage debate reveals a stark cultural divide within the tech industry. On one side are developers like Johnson and Frazee, who see AI tools as a natural evolution of programming—akin to adopting a new IDE or debugging tool. On the other are users and critics who view AI integration as a step backward, associating it with laziness, unreliability, and a disregard for craftsmanship. This divide is particularly pronounced among Bluesky’s user base, many of whom migrated from X in search of a less algorithmically driven, more transparent platform. Randi Lee Harper, a Bluesky user, articulated the tension in a post: "There’s an actual conversation to be had about AI-assisted coding and being a software developer that architects more complex systems, and where AI can be incredibly useful. But it’s impossible to have that conversation when folks not in tech jump in saying ‘AI is bad, always.’"

The Future of AI in Software Development: Collaboration or Contention?

As AI tools like GitHub Copilot, Amazon Q Developer (formerly CodeWhisperer), and Anthropic’s Claude Code become more sophisticated, their role in software development will only expand. The question is whether the tech industry can bridge the gap between developers who see AI as a collaborative tool and users who view it as a threat to quality. One potential solution lies in transparency: companies adopting AI tools could publish clear guidelines on how they are used, emphasizing human oversight and rigorous testing. Bluesky’s approach of acknowledging AI use while maintaining traditional engineering practices may offer a middle ground. However, the backlash over the Attie project suggests that even well-intentioned AI integration will face resistance from users who prioritize control and transparency over innovation. The industry must also address the genuine concerns about security and reliability, investing in tools to detect AI-generated vulnerabilities and ensuring that human developers remain accountable for the code they deploy.

Lessons from Past Tech Failures: Why Context Matters in the AI Blame Game

History shows that tech failures are rarely the result of a single factor, and that most have had nothing to do with AI. The 2017 GitLab database outage, which lost about six hours of production data, began when an engineer mistakenly deleted the primary database directory and was compounded by backup mechanisms that had failed, not by AI. The 2020 Twitter hack, which compromised high-profile accounts, stemmed from social engineering, not automated code. Even the LastPass breach disclosed in 2022, which exposed encrypted customer password vaults, was traced to attackers compromising an engineer’s home computer, not to AI-generated vulnerabilities. These examples highlight a critical truth: blaming every tech failure on AI is as reductive as attributing every success to human ingenuity alone. The real issue is the lack of nuance in how we discuss technology’s role in our lives. As AI tools become ubiquitous, the tech community must foster a more informed dialogue, one that acknowledges both the opportunities and the risks without defaulting to knee-jerk reactions.

Key Takeaways: What the Bluesky Outage Reveals About AI in Tech

- Bluesky’s recent outage was blamed on 'vibe coding' despite no evidence linking the issue to AI tools, reflecting broader skepticism about AI in software development.

- Bluesky developers have openly discussed using AI tools like Claude Code, sparking backlash from users who oppose AI integration, even for experimental projects like Attie.

- The debate over AI coding tools ignores key distinctions between amateur 'vibe coding' and experienced developers using AI as a productivity enhancer with proper oversight.

- Historical tech failures show that glitches are rarely the result of a single factor, and blaming AI without evidence risks oversimplifying complex issues.

- The cultural divide between developers who embrace AI and users who distrust it highlights the need for transparency and education in the tech industry.

Frequently Asked Questions

- Is 'vibe coding' the same as using AI coding tools?

- No. While 'vibe coding' originally referred to amateurs using AI to generate brittle code without understanding it, many experienced developers use AI tools as a sophisticated autocomplete to enhance productivity while still relying on their own expertise to review and test the output.

- Did Bluesky’s outage actually happen because of AI tools?

- There is no evidence that it did. Bluesky attributed the outage to an 'upstream service provider,' but many users blamed AI-assisted 'vibe coding' anyway. The incident highlights how quickly users assume the worst about AI when problems arise.

- Should companies be transparent about using AI in their development process?

- Transparency can help build trust, but it may also invite backlash from users who oppose AI integration. Companies adopting AI tools should clearly communicate their role, emphasize human oversight, and invest in security measures to address user concerns.