NVIDIA unveiled DLSS 5 at GTC 2026, the next major step in its AI-driven neural rendering technology. Scheduled to launch in Fall 2026 on RTX 50 Series GPUs, DLSS 5 promises real-time photoreal lighting and material rendering driven by neural networks. Unlike prior DLSS iterations, which focused on performance through upscaling and frame generation, DLSS 5 aims to enhance visual fidelity itself: it adds details such as lifelike skin textures, fabric sheens, and dynamic lighting interactions to game frames while preserving the creative intent of game developers. The technology marks a shift away from traditional rendering techniques, using deep learning to synthesize visual enhancements that were previously too expensive to compute in real time.

- DLSS 5 launches Fall 2026 with RTX 50 Series GPUs, promising photoreal lighting and materials via real-time AI neural rendering.

- Technology uses game-provided color and motion vectors to produce deterministic, temporally stable frames with enhanced realism.

- Early demos required dual RTX 5090s, but NVIDIA asserts the final version will run efficiently on a single GPU.

- Developers gain granular control over intensity, color grading, and masking to preserve artistic vision.

What Is DLSS 5? A New Paradigm in Real-Time Neural Rendering

DLSS 5 is not merely an incremental update to NVIDIA’s Deep Learning Super Sampling (DLSS) technology; NVIDIA positions it as a rethinking of how real-time graphics are rendered and enhanced. Introduced in 2018 alongside the RTX 20 Series, DLSS began as an AI upscaling technique that reconstructed higher-resolution frames from lower-resolution inputs, boosting performance while aiming to preserve image quality. Subsequent iterations introduced frame generation (DLSS 3) and AI-based ray tracing denoising (Ray Reconstruction in DLSS 3.5), further improving visual fidelity and smoothness. DLSS 5 goes beyond these capabilities by embedding a real-time neural rendering model directly into the graphics pipeline.

At its core, DLSS 5 uses an AI model trained on large datasets of photorealistic 3D scenes to recognize semantic elements, such as skin, hair, fabric, translucent materials, and light interactions, and to apply this knowledge to each rendered frame. The system ingests per-frame color data and motion vectors, processes them through the neural network, and outputs a frame with enhanced lighting, material properties, and micro-details that more closely resemble real-world appearance. Crucially, NVIDIA emphasizes that this process is "deterministic and temporally stable," meaning the same inputs always produce the same output and the enhancements remain consistent from frame to frame, avoiding flickering or artifacts that could disrupt gameplay immersion.

From Temporal Upscaling to Neural Synthesis: How DLSS 5 Differs from Previous Versions

To appreciate how DLSS 5 differs, it helps to contrast it with its predecessors. DLSS 1.0 relied on a spatial AI upscaler trained per game, which primarily benefited performance rather than visual quality. DLSS 2.0 introduced a generalized temporal upscaling model that improved image sharpness and reduced artifacts, while DLSS 3 added frame generation, effectively doubling frame rates by inserting AI-generated frames between rendered ones. DLSS 3.5 added Ray Reconstruction, an AI denoiser that made path-traced lighting more viable in real time, and DLSS 4 extended frame generation to multiple generated frames per rendered frame and moved its models to a transformer architecture.

DLSS 5, by contrast, abandons the notion of merely reconstructing or interpolating frames. Instead, it synthesizes new visual data by predicting how light should interact with surfaces based on learned patterns from real-world scenes. For example, when rendering a character’s face, the AI model can infer the subtle subsurface scattering in skin, the sheen on wet fabric, or the complex reflections on metallic armor—details that are often omitted in traditional real-time rendering due to computational constraints. This approach aligns with NVIDIA’s broader strategy to use AI to bridge the gap between offline cinematic rendering and real-time game graphics, a goal that has long been a holy grail in the industry.

How DLSS 5 Works: The Technical Backbone of AI-Enhanced Realism

While NVIDIA has not disclosed the full technical specifications of DLSS 5, several key operational details have emerged from its GTC 2026 presentations and developer documentation. The technology operates as a post-processing layer that sits between the game engine’s initial frame render and the final display output. Here’s a breakdown of the core components and workflow:

Input Data: Color, Motion, and Semantic Context

DLSS 5 relies on two primary inputs provided by the game engine: color buffers and motion vectors. The color buffer contains the raw pixel data of the rendered frame, while motion vectors describe how each pixel moves between frames—a critical component for temporal stability. These inputs are fed into a neural network that has been pre-trained on a diverse dataset of high-fidelity 3D scenes, encompassing various materials, lighting conditions, and environmental scenarios.
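To illustrate why motion vectors matter for temporal stability, the sketch below reprojects a previous frame into the current one so that history can be compared pixel by pixel. It is a generic temporal-reprojection example in plain Python, not NVIDIA’s implementation. The function name `reproject`, the frame layout (lists of rows), and the convention that a motion vector points from a pixel’s current position back to its previous position are all assumptions made for illustration.

```python
from typing import List, Tuple

Frame = List[List[float]]                   # grayscale frame: rows of pixel values
MotionField = List[List[Tuple[int, int]]]   # per-pixel (dx, dy) offsets, in pixels


def reproject(prev: Frame, motion: MotionField, fallback: float = 0.0) -> Frame:
    """Fetch, for every current pixel, the value it had in the previous frame.

    Assumed convention: motion[y][x] = (dx, dy) points from the pixel's
    current position to where it was located in the previous frame.
    """
    height, width = len(prev), len(prev[0])
    out: Frame = []
    for y in range(height):
        row = []
        for x in range(width):
            dx, dy = motion[y][x]
            px, py = x + dx, y + dy
            if 0 <= px < width and 0 <= py < height:
                row.append(prev[py][px])
            else:
                # Newly revealed or off-screen content has no usable history.
                row.append(fallback)
        out.append(row)
    return out


# A bright pixel at x=0 moves one pixel to the right between frames,
# so every current pixel looks one step to the left for its history.
prev_frame = [[1.0, 0.0, 0.0]]
motion_field = [[(-1, 0), (-1, 0), (-1, 0)]]
print(reproject(prev_frame, motion_field))  # [[0.0, 1.0, 0.0]]
```

Real pipelines use sub-pixel motion vectors with filtered lookups; integer offsets are used here only to keep the example short.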

Neural Rendering Model: Understanding and Enhancing the Scene

NVIDIA has not published the architecture of the model at the heart of DLSS 5. It is presumably a deep neural network, possibly combining convolutional and transformer-based components given the scene understanding required. The model is trained to identify semantic categories within the scene, such as skin, hair, metal, glass, fabric, and organic materials, and to predict how light should interact with these surfaces in real time. For instance, it can determine that a character’s wet hair should exhibit specular highlights and that translucent objects like leaves or jelly should refract light in specific ways. This semantic awareness allows the AI to apply photorealistic enhancements that go beyond simple upscaling or denoising.

Output: Deterministic, Temporally Stable Frames with Enhanced Fidelity

The output of DLSS 5 is a frame that retains the structural integrity of the original render but with augmented lighting, material properties, and micro-details. NVIDIA emphasizes that the process is deterministic, meaning the AI’s enhancements are consistent and reproducible across multiple runs, which is essential for multiplayer gaming and competitive esports where frame consistency is paramount. Temporal stability ensures that enhancements do not flicker or shift unpredictably between frames, preserving the smoothness and immersion of gameplay.
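The two properties can be illustrated with a small, generic example. The sketch below blends each new sample of a single pixel with its history using an exponential moving average, a common way to damp frame-to-frame flicker. It is not DLSS 5’s method, which NVIDIA has not published. The names `blend_history`, `stabilize`, and `max_step`, as well as the blend weight `alpha`, are assumptions chosen for illustration.

```python
from typing import List


def blend_history(history: float, current: float, alpha: float = 0.1) -> float:
    """Exponential moving average of one pixel value across frames."""
    return (1.0 - alpha) * history + alpha * current


def stabilize(samples: List[float], alpha: float = 0.1) -> List[float]:
    """Apply the blend to a per-frame sequence of values for a single pixel."""
    if not samples:
        return []
    history = samples[0]
    out = []
    for sample in samples:
        history = blend_history(history, sample, alpha)
        out.append(history)
    return out


def max_step(values: List[float]) -> float:
    """Largest frame-to-frame change, used here as a crude flicker measure."""
    return max(abs(b - a) for a, b in zip(values, values[1:]))


flicker = [0.4, 0.6] * 8          # a pixel that alternates every frame
stable = stabilize(flicker)

# Temporal stability: the blended sequence changes far less per frame.
print(max_step(flicker) > max_step(stable))   # True
# Determinism: no random state, so identical inputs give identical outputs.
print(stabilize(flicker) == stable)           # True
```

A small `alpha` suppresses flicker more strongly but makes the output slower to react to genuine changes, which is the usual trade-off in temporal filtering.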

Developer Controls: Balancing Realism and Artistic Intent

One of the most significant criticisms leveled at earlier AI-enhanced rendering technologies was their tendency to alter the intended artistic direction of a game. To address this, NVIDIA has integrated several developer controls into DLSS 5. These include intensity sliders to adjust the strength of the neural rendering effects, color grading presets to fine-tune the tone and mood of the output, and masking tools to selectively apply enhancements to specific elements within a scene. For example, a developer could choose to enhance only metallic surfaces while leaving organic materials untouched, or apply a warmer color grade to interior scenes while maintaining cooler tones outdoors. This level of customization ensures that DLSS 5 can adapt to the unique aesthetic of each game rather than imposing a generic "AI filter" look.
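The controls described above can be thought of as a per-pixel blend between the original render and the enhanced frame. The following is a minimal sketch of that idea, not the actual DLSS 5 interface: the function `apply_controls`, its parameters, and the use of a simple per-channel gain as a stand-in for color grading are all hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # RGB components in [0, 1]


def apply_controls(
    original: List[Pixel],
    enhanced: List[Pixel],
    mask: List[float],
    intensity: float = 1.0,
    grade: Pixel = (1.0, 1.0, 1.0),
) -> List[Pixel]:
    """Blend enhanced pixels over the original render.

    mask:      per-pixel weight in [0, 1]; 0 leaves the original untouched.
    intensity: global strength of the enhancement in [0, 1].
    grade:     hypothetical per-channel gain applied to the enhanced pixel.
    """
    if not (len(original) == len(enhanced) == len(mask)):
        raise ValueError("original, enhanced and mask must have equal length")
    out: List[Pixel] = []
    for orig_px, enh_px, m in zip(original, enhanced, mask):
        weight = max(0.0, min(1.0, intensity * m))
        graded = [min(1.0, c * g) for c, g in zip(enh_px, grade)]
        r, g_, b = (o + weight * (e - o) for o, e in zip(orig_px, graded))
        out.append((r, g_, b))
    return out


original = [(0.2, 0.2, 0.2), (0.5, 0.5, 0.5)]
enhanced = [(0.6, 0.6, 0.6), (0.9, 0.9, 0.9)]
mask = [1.0, 0.0]   # enhance the first pixel only, e.g. a metallic surface

result = apply_controls(original, enhanced, mask, intensity=0.5)
print(result)
```

With the mask set to zero, the second pixel keeps its original value exactly, while the first moves halfway toward the enhanced value because `intensity` is 0.5.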

Hardware Requirements and Performance: Early Demos vs. Consumer Reality

The unveiling of DLSS 5 at GTC 2026 was accompanied by eye-catching demonstrations that showcased the technology’s potential, but also raised questions about its practicality. According to reports from attendees, the initial demos ran on a dual GeForce RTX 5090 configuration, with one GPU dedicated solely to the DLSS 5 neural rendering model while the other handled the game’s native rendering. This setup is far from typical consumer hardware and highlights the significant computational demands of real-time neural rendering at this scale.

For context, the RTX 5090 is NVIDIA’s flagship consumer GPU, with Tensor Cores optimized for AI workloads and 32 GB of GDDR7 memory to handle the memory-intensive nature of neural networks. Even this high-end hardware required a dual-GPU configuration to achieve stable frame rates during the demos, underscoring the early-stage nature of the technology. NVIDIA has since clarified that these demonstrations were intended to showcase the upper bounds of DLSS 5’s capabilities rather than reflect its optimized, consumer-ready performance.

NVIDIA’s Claims: Single-GPU Optimization by Launch

Despite the resource-intensive nature of the early demos, NVIDIA has asserted that the final version of DLSS 5 will be optimized to run efficiently on a single RTX 50 Series GPU. The company’s engineers are reportedly refining the neural rendering model to reduce memory bandwidth usage, minimize latency, and improve overall performance. While NVIDIA has not yet released official hardware requirements, industry analysts speculate that DLSS 5 will be compatible with mid-range RTX 50 GPUs, though the most demanding scenes may still require high-end models like the 5090 or 5080.

It is also worth noting that DLSS 5 is designed to integrate seamlessly with NVIDIA’s existing technologies, including the Streamline framework. Streamline is a cross-vendor framework that simplifies the integration of temporal upscaling and frame generation technologies across different GPU architectures and game engines. By leveraging Streamline, NVIDIA aims to ensure that DLSS 5 can be adopted quickly by developers without requiring extensive modifications to their engines or pipelines.

Game Support and Integration: Which Titles Will Benefit First?

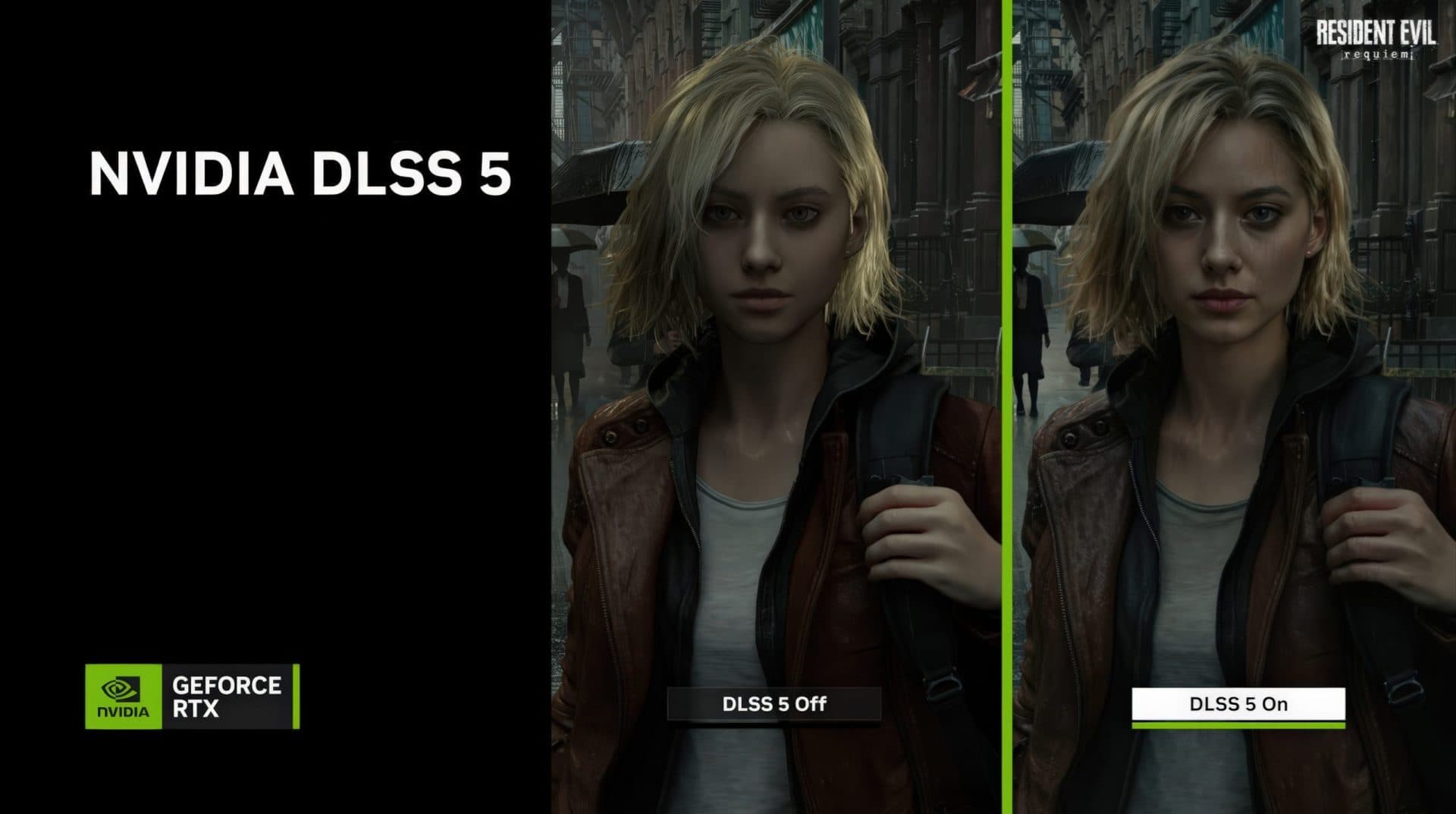

NVIDIA has indicated that DLSS 5 will be supported by major game publishers and studios, with a first wave of titles already announced. These include *Starfield*, *Hogwarts Legacy*, and *Resident Evil Requiem*, as well as upcoming releases from Ubisoft, EA, and other major publishers. The integration process will involve close collaboration between NVIDIA’s engineering teams and game developers to ensure that the neural rendering model is tailored to each game’s visual style and technical requirements.

For developers, integrating DLSS 5 will likely require updates to their game engines and shaders, as well as the implementation of the Streamline framework. NVIDIA has emphasized that the technology is designed to be flexible and adaptable, allowing developers to fine-tune the neural rendering effects to match their artistic vision. This level of customization is expected to accelerate adoption, as studios can integrate DLSS 5 without compromising their creative control.

The Broader Implications of DLSS 5: AI, Realism, and the Future of Gaming

DLSS 5 represents more than just a technological milestone for NVIDIA—it signals a broader shift in the gaming industry toward AI-driven realism. As real-time ray tracing becomes increasingly common, and as hardware capabilities continue to advance, the demand for photoreal graphics has never been higher. However, traditional rendering techniques struggle to deliver the level of detail and fidelity that players and developers aspire to. DLSS 5 addresses this gap by using AI to synthesize visual enhancements that would otherwise be impossible to achieve in real time.

This innovation could have far-reaching implications for the gaming ecosystem. For players, it promises a new era of immersion, with games that look and feel more lifelike than ever before. For developers, it offers a powerful tool to elevate their visual storytelling without sacrificing performance or requiring excessive hardware investments. For the industry as a whole, DLSS 5 could accelerate the adoption of advanced rendering techniques, such as path tracing and global illumination, by making them more accessible and practical in real-time applications.

Moreover, DLSS 5’s emphasis on deterministic and temporally stable output addresses longstanding concerns about AI-enhanced graphics, such as flickering, artifacts, and inconsistencies. By grounding the neural rendering process in the game’s 3D content, NVIDIA aims to keep the enhancements true to the developer’s original vision and preserve the artistic integrity of the game.

Challenges and Considerations: Will DLSS 5 Live Up to the Hype?

While the potential of DLSS 5 is undeniable, several challenges and considerations remain. First and foremost is the question of hardware requirements. Even with NVIDIA’s claims of single-GPU optimization, the computational demands of real-time neural rendering are significant. Players with mid-range or older GPUs may struggle to run DLSS 5 at high settings, potentially limiting its accessibility. Additionally, the memory requirements of neural networks could pose challenges for games with large, open worlds or complex scenes, necessitating careful optimization by developers.

Another consideration is the impact of DLSS 5 on latency. Real-time AI processing introduces additional computational overhead, which could increase input lag if not properly managed. NVIDIA has addressed this concern by integrating DLSS 5 with its Reflex technology, which is designed to reduce latency in competitive gaming scenarios. However, the effectiveness of this integration will depend on the final implementation and hardware support.
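The latency concern can be made concrete with simple frame-time arithmetic. The sketch below compares running the game render and the neural pass back to back on one GPU with an idealized two-GPU pipeline like the one reportedly used in the early demos. The timings of 10 ms and 6 ms are hypothetical placeholders, not measurements, and the model ignores the cost of moving frames between GPUs.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available per frame at a given frame rate."""
    return 1000.0 / target_fps


def single_gpu_fps(render_ms: float, neural_ms: float) -> float:
    """Both passes run back to back on one GPU, so their costs add up."""
    return 1000.0 / (render_ms + neural_ms)


def dual_gpu_fps(render_ms: float, neural_ms: float) -> float:
    """Idealized two-GPU pipeline: throughput is set by the slower stage."""
    return 1000.0 / max(render_ms, neural_ms)


def frame_latency_ms(render_ms: float, neural_ms: float) -> float:
    """Each frame still passes through both stages before display."""
    return render_ms + neural_ms


RENDER_MS = 10.0   # hypothetical cost of the game's own rendering
NEURAL_MS = 6.0    # hypothetical cost of the neural rendering pass

print(single_gpu_fps(RENDER_MS, NEURAL_MS))    # 62.5
print(dual_gpu_fps(RENDER_MS, NEURAL_MS))      # 100.0
print(frame_latency_ms(RENDER_MS, NEURAL_MS))  # 16.0
print(frame_budget_ms(60.0))
```

Under these assumptions a second GPU raises throughput but does not shorten the time a single frame spends in the pipeline, which is why the cost of the neural pass matters for input lag regardless of the hardware configuration.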

Finally, there is the question of adoption. While NVIDIA has secured support from major publishers, the success of DLSS 5 will ultimately depend on its seamless integration into existing workflows and its ability to deliver consistent, high-quality results across a wide range of games and hardware configurations. Developers will need to invest time and resources into optimizing their titles for DLSS 5, and players will need to upgrade their hardware to take full advantage of the technology.

What’s Next for NVIDIA and the Future of Neural Rendering?

DLSS 5 is just one step in NVIDIA’s long-term vision for AI-driven graphics. The company has hinted at further advancements in neural rendering, including the potential for even more sophisticated AI models that can predict and synthesize complex visual phenomena in real time. As hardware continues to evolve, and as AI techniques become more advanced, the line between real-time and offline rendering will continue to blur, opening up new possibilities for game developers and players alike.

In the meantime, the gaming community will eagerly await the Fall 2026 launch of DLSS 5, as well as the first wave of titles that will showcase its capabilities. With NVIDIA’s track record of innovation and its deep integration into the PC gaming ecosystem, DLSS 5 has the potential to redefine the standards for visual fidelity in real-time graphics.

Frequently Asked Questions

- What GPUs will support DLSS 5 at launch?

  NVIDIA has not yet released an official compatibility list, but DLSS 5 is expected to be supported by the RTX 50 Series GPUs, with higher-end models like the RTX 5090 and 5080 likely offering the best performance. Mid-range RTX 50 GPUs may also receive support, though demanding scenes may require more powerful hardware.

- Will DLSS 5 work with older RTX GPUs like the RTX 40 Series?

  NVIDIA has not provided details on backward compatibility, but given the significant hardware demands of DLSS 5, it is unlikely to be supported on older GPUs like the RTX 40 Series. The technology is designed to leverage the advanced Tensor Cores and AI acceleration features of the RTX 50 Series.

- How will DLSS 5 affect game performance and frame rates?

  DLSS 5 is not primarily designed to boost performance like previous DLSS versions; instead, it enhances visual fidelity. Early demos showed significant computational overhead, but NVIDIA claims the final version will be optimized for single-GPU efficiency. Performance impact will vary by game and hardware configuration.